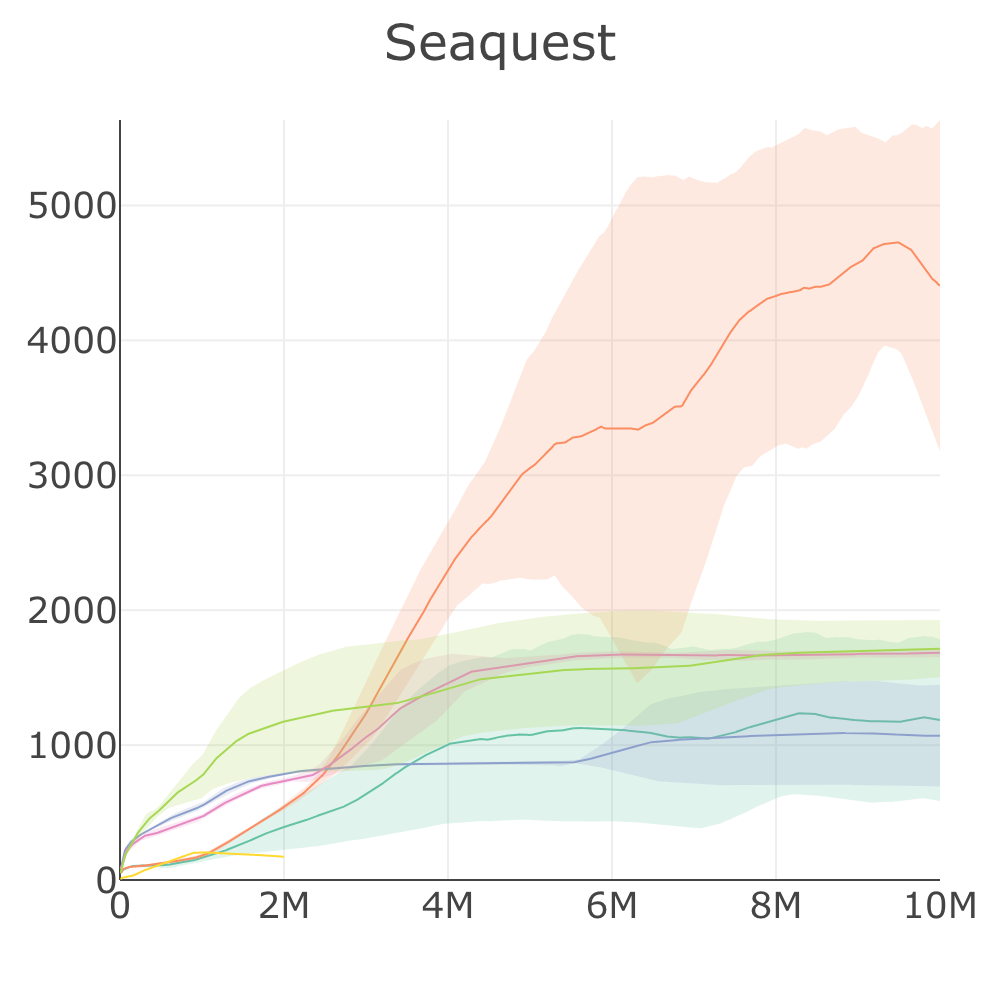

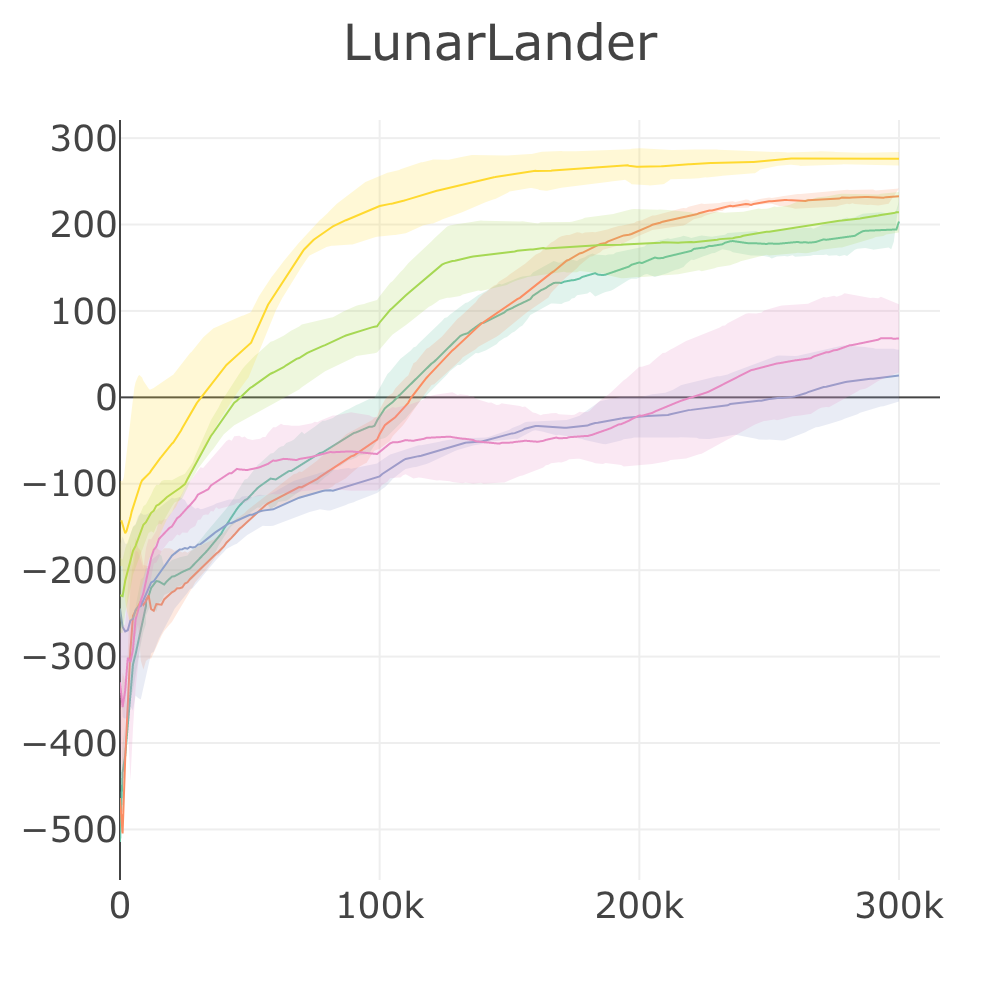

# Discrete Environment Benchmark

## :first_place: Discrete Environment Benchmark Result

* [Upload PR #427](https://github.com/kengz/SLM-Lab/pull/427)

* [Dropbox data](https://www.dropbox.com/s/az4vncwwktyotol/benchmark_discrete_2019_09.zip?dl=0)

| Env. \ Alg. | DQN | DDQN+PER | A2C (GAE) | A2C (n-step) | PPO | SAC |

| -------------: | :---: | :------: | :-------: | :----------: | :-------: | :-----: |

| Breakout | 80.88 | 182 | 377 | 398 | **443** | 3.51\* |

| Pong | 18.48 | 20.5 | 19.31 | 19.56 | **20.58** | 19.87\* |

| Qbert | 5494 | 11426 | 12405 | **13590** | 13460 | 923\* |

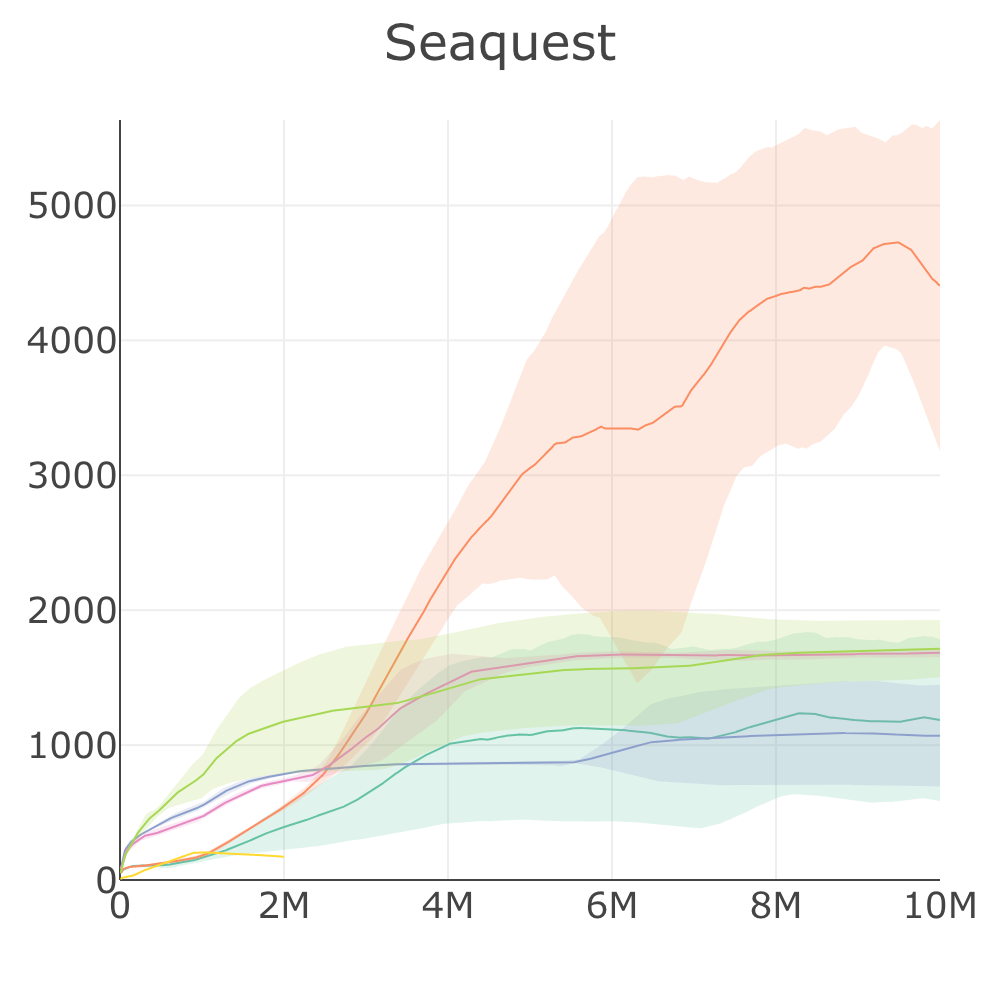

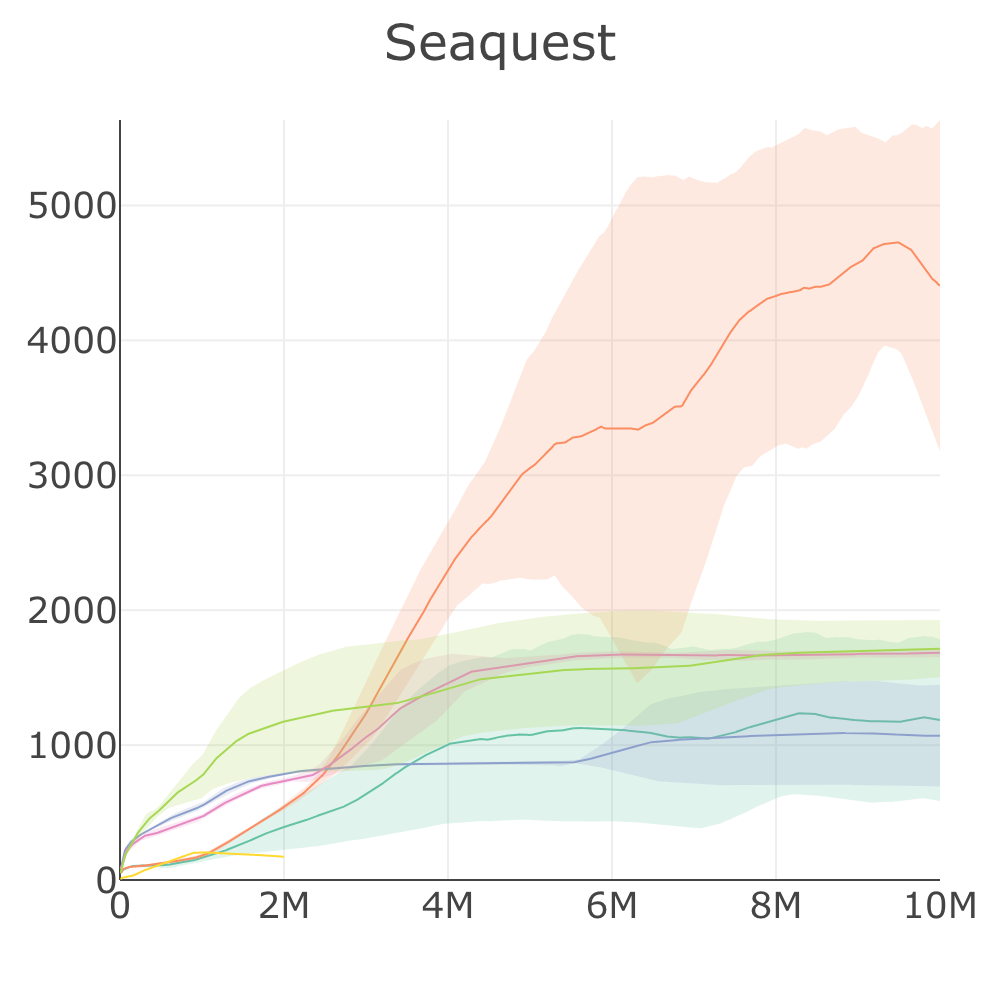

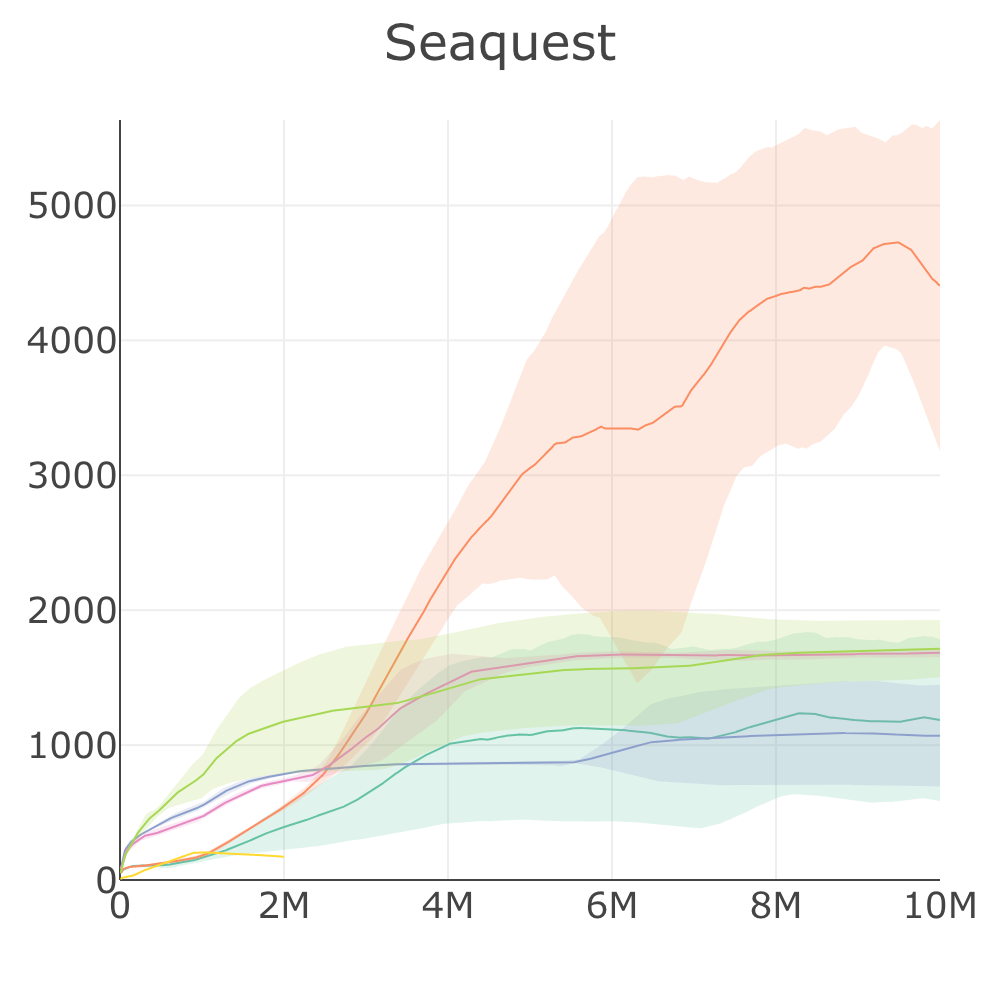

| Seaquest | 1185 | **4405** | 1070 | 1684 | 1715 | 171\* |

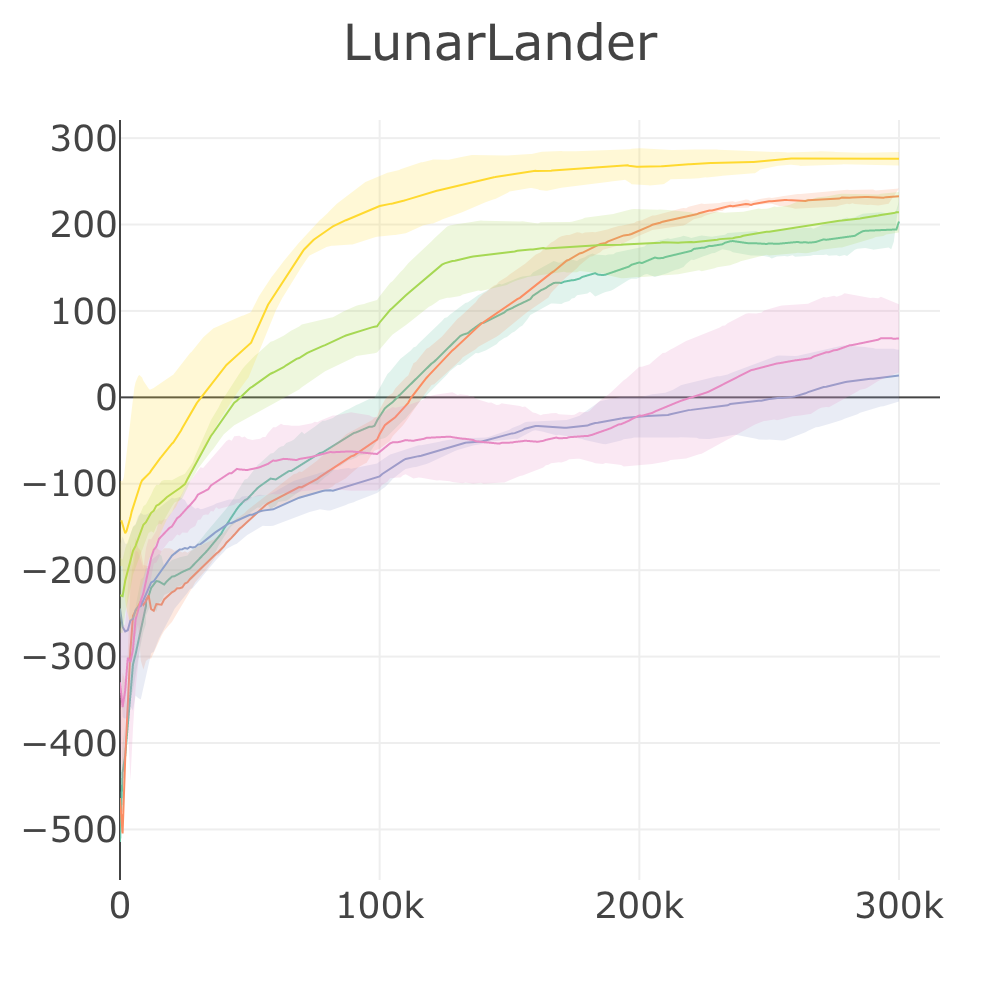

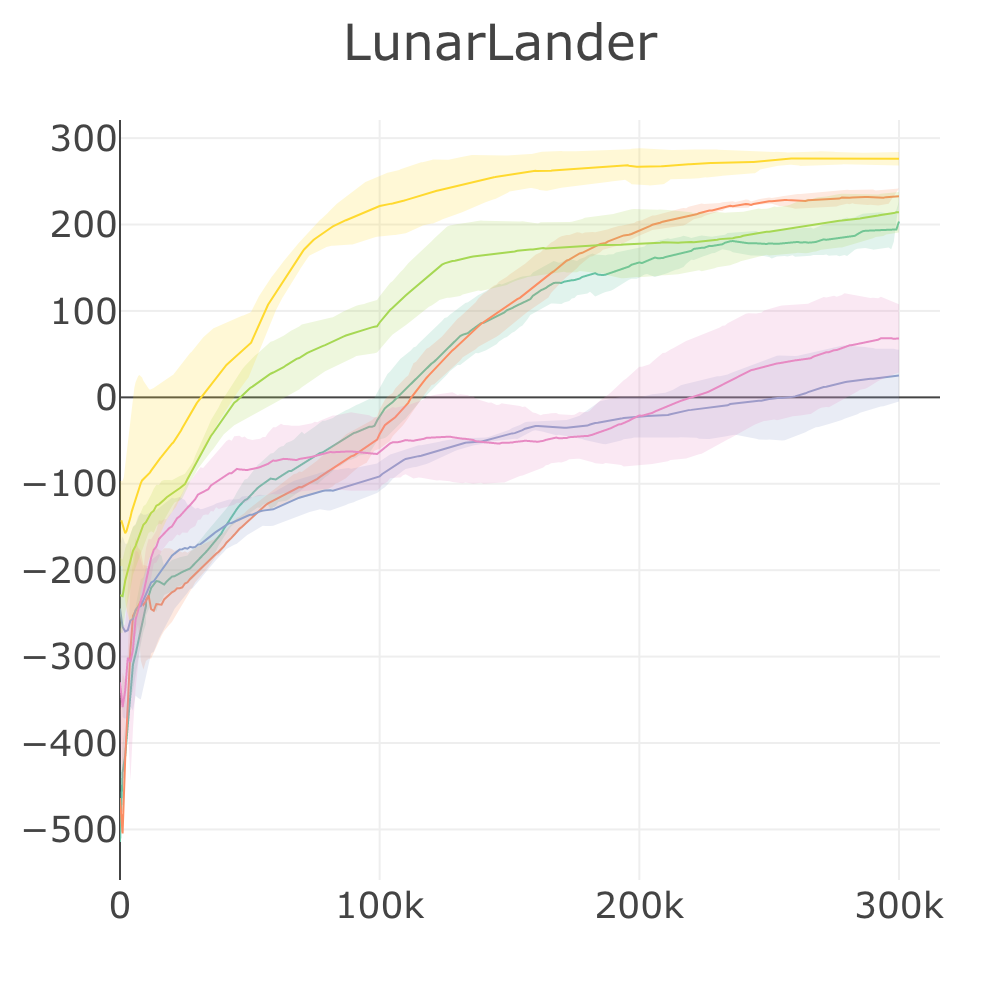

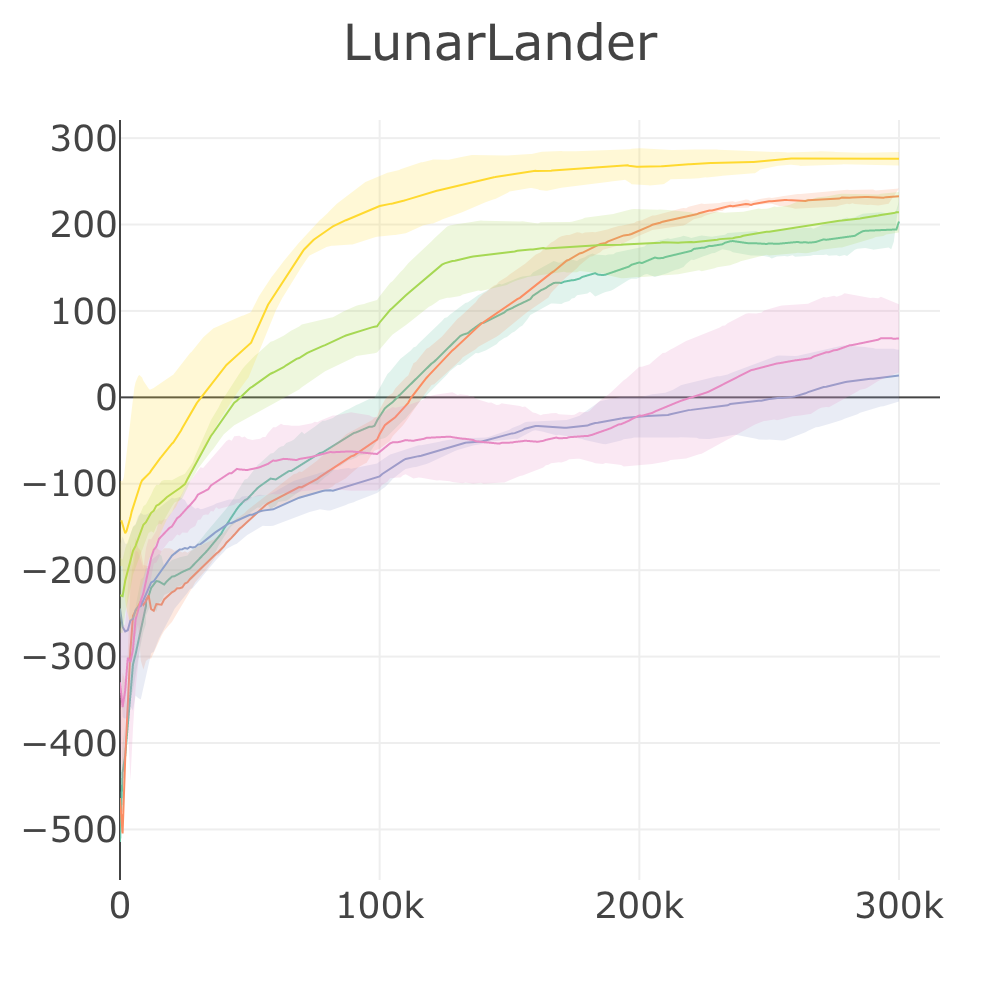

| LunarLander | 192 | 233 | 25.21 | 68.23 | 214 | **276** |

| UnityHallway | -0.32 | 0.27 | 0.08 | -0.96 | **0.73** | 0.01 |

| UnityPushBlock | 4.88 | 4.93 | 4.68 | 4.93 | **4.97** | -0.70 |

> Episode score at the end of training attained by SLM Lab implementations on discrete-action control problems. Reported episode scores are first averaged over the last 100 checkpoints, then averaged over 4 Sessions. A Random baseline with score averaged over 100 episodes is included. Results marked with `*` were trained using the hybrid synchronous/asynchronous version of SAC to parallelize and speed up training. For SAC, Breakout, Pong, and Seaquest were trained for 2M frames instead of 10M frames.
>
> For the full Atari benchmark, see [Atari Benchmark](https://github.com/kengz/SLM-Lab/blob/benchmark/BENCHMARK.md#atari-benchmark).
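The two-stage averaging described in the caption can be made concrete with a short sketch. It assumes each Session's checkpoint scores are available as a plain list of floats; the function name `final_score` and this input layout are hypothetical, and this is not SLM Lab's own analysis code.

```python
from statistics import mean
from typing import Sequence


def final_score(session_scores: Sequence[Sequence[float]], window: int = 100) -> float:
    """Average each session's last `window` checkpoint scores, then average across sessions.

    Hypothetical helper: `session_scores[i]` holds the per-checkpoint episode
    scores of Session i, in chronological order.
    """
    if not session_scores:
        raise ValueError("need at least one session")
    per_session = []
    for scores in session_scores:
        if not scores:
            raise ValueError("each session needs at least one checkpoint score")
        tail = scores[-window:]  # last `window` checkpoints (all of them if fewer exist)
        per_session.append(mean(tail))
    # Every session contributes equally, regardless of how many checkpoints it logged.
    return mean(per_session)


if __name__ == "__main__":
    # Synthetic data for illustration only (not benchmark results):
    # 4 sessions, 150 checkpoints each; only the last 100 are counted.
    sessions = [[0.0] * 50 + [float(i + 1)] * 100 for i in range(4)]
    print(final_score(sessions))  # 2.5
```

Because the per-session means are averaged with equal weight, a Session that logged more checkpoints does not dominate the reported number.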

## :chart_with_upwards_trend: Discrete Environment Benchmark Result Plots

#### Plot Legend

---

# Agent Instructions: Querying This Documentation

If you need additional information that is not directly available in this page, you can query the documentation dynamically by asking a question.

Perform an HTTP GET request on the current page URL with the `ask` query parameter:

```
GET https://slm-lab.gitbook.io/slm-lab/master/benchmark-results/discrete-benchmark.md?ask=
```

The question should be specific, self-contained, and written in natural language.

The response will contain a direct answer to the question and relevant excerpts and sources from the documentation.

Use this mechanism when the answer is not explicitly present in the current page, you need clarification or additional context, or you want to retrieve related documentation sections.
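A small sketch of building the query URL programmatically follows. It assumes the question should be percent-encoded into the `ask` parameter (spaces as `%20`); the helper name `build_ask_url` is hypothetical, and since the response format is not specified here, the sketch only constructs the URL rather than parsing a reply.

```python
from urllib.parse import quote, urlencode

# Page URL taken from the instructions above.
DOCS_PAGE = "https://slm-lab.gitbook.io/slm-lab/master/benchmark-results/discrete-benchmark.md"


def build_ask_url(question: str) -> str:
    """Return the GET URL with `question` percent-encoded in the `ask` parameter (hypothetical helper)."""
    # quote_via=quote encodes spaces as %20 instead of '+'.
    return f"{DOCS_PAGE}?{urlencode({'ask': question}, quote_via=quote)}"


if __name__ == "__main__":
    print(build_ask_url("How is SAC trained?"))
```

The resulting URL can be passed to any HTTP client, for example `urllib.request.urlopen`.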
